Sam Altman fears a "Dead Internet": SEO impact and solutions

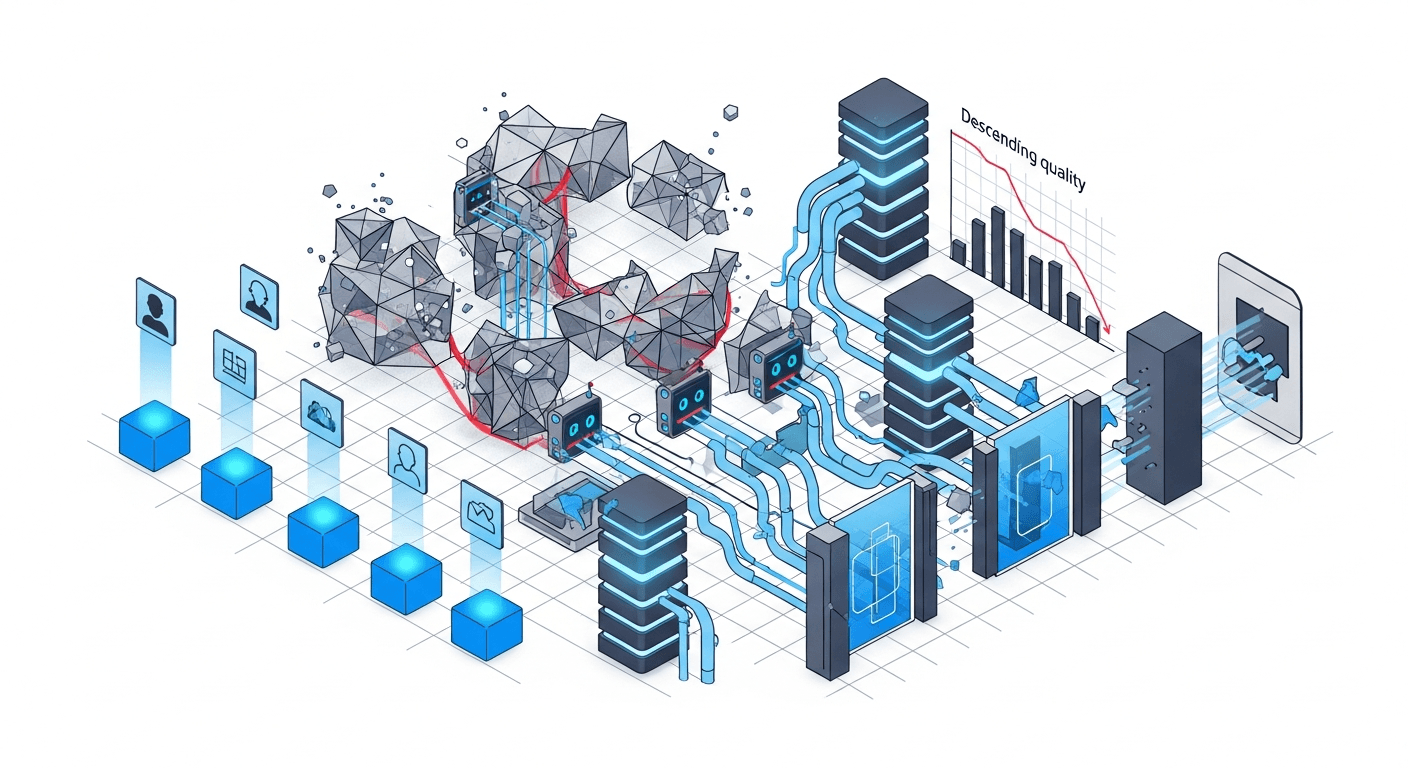

The OpenAI CEO warns of an Internet overwhelmed by AI. What are the consequences for your visibility and how can you adapt your SEO/GEO strategy?

Sam Altman, CEO of OpenAI, recently expressed a concern that should get the attention of every business leader: the "Dead Internet Theory" could become reality. This theory, long confined to conspiracy forums, predicts an Internet dominated by automatically generated content, where authentic human content becomes a minority, or even invisible.

For SMEs and mid-market companies that depend on their online visibility, this prospect isn't abstract. It poses an immediate strategic question: how do you stand out in an ocean of synthetic content? How will search engines and generative AI distinguish your real expertise from the ambient noise?

This article analyzes the concrete implications of this evolution for your SEO and GEO strategy, and proposes actionable solutions for 2025 and beyond.

The Dead Internet Theory: from conspiracy to operational reality

The Dead Internet Theory emerged around 2016 on forums like 4chan. Its central thesis: a growing proportion of online content is generated by bots, to the point that authentic human interactions become rare. For years, this idea was considered paranoid.

In 2025, the data tells a different story. According to a study by Originality.ai, nearly 57% of high-visibility web content shows markers of AI generation. Amazon has removed thousands of automatically generated books. Social networks are fighting against increasingly sophisticated bot farms.

Why Sam Altman is sounding the alarm

The OpenAI CEO's concern is paradoxical: he leads the company that democratized content generation tools. But Altman sees the systemic problem. If ChatGPT and its competitors enable the production of millions of articles, comments, and posts every day, the ability of platforms and search engines to identify valuable content erodes.

Altman stated that the speed at which AI can generate content now exceeds platforms' capacity to filter it. For businesses, this means one thing: content strategies based on volume are doomed.

Signals visible today

- Indexed content inflation: Google indexes billions of new pages each month, but the proportion of value-added content is decreasing.

- SERP degradation: search results for commercial queries are increasingly polluted by automatically generated affiliate sites.

- Growing user distrust: 63% of French internet users report doubting the authenticity of reviews and content they consult, according to a 2024 IFOP study.

Direct impact on traditional SEO: the rules are changing

SEO as practiced for the past 15 years relies on principles that AI pollution is challenging. Search engines are adapting their algorithms, and these adaptations have immediate consequences for your visibility.

Google strengthens detection of low-value AI content

Google's "Helpful Content" system, folded into its core ranking systems with the March 2024 core update, explicitly targets sites whose content appears to be produced primarily for search engines rather than for users. The analyzed signals include:

- Stylistic homogeneity across the entire site, typical of generated content

- Absence of unique perspective or demonstrable expertise

- Ratio of content volume to real engagement signals

- Consistency between author entity and subject matter

Sites affected by this update lost an average of 40 to 80% of their organic traffic. Recovery is long and uncertain.

The end of "sufficient content"

For years, the dominant SEO strategy consisted of producing "good enough" content on as many keywords as possible. Generative AI has made this approach obsolete. If anyone can produce a 1,500-word article on any topic in 30 seconds, the competition moves elsewhere.

At AISOS, we observe that the companies maintaining their visibility are those that have invested in assets AI cannot replicate: proprietary data, documented client case studies, and strong viewpoints owned by identifiable experts.

E-E-A-T becomes non-negotiable

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) is no longer a bonus: it's the primary filter. Concrete criteria include:

- Experience: evidence that the author has hands-on experience with the subject (case studies, verifiable testimonials)

- Expertise: verifiable credentials, publications in the field, peer recognition

- Authoritativeness: citations by other reliable sources, quality backlinks

- Trustworthiness: transparency about company identity, legal notices, clear editorial policy

GEO and generative AI: a new battlefield

Traditional SEO focuses on Google. But in 2025, a growing share of informational searches goes through ChatGPT, Perplexity, Google AI Overviews, and Gemini. These systems have their own source selection criteria, and Internet pollution affects them differently.

How LLMs select their sources

Language models like GPT-4 or Claude don't function like traditional search engines. Their source selection relies on:

- Consistency with training corpus: sources regularly cited in reliable contexts are favored

- Entity clarity: LLMs better understand content where companies, people, and concepts are explicitly named and described

- Verifiable timeliness: for recent queries, RAG (Retrieval-Augmented Generation) systems favor dated and sourced content

- Logical structure: content organized in autonomous and coherent sections is more easily extracted and cited

The risk of dilution in noise

If the Internet fills with AI content that paraphrases the same information, LLMs will struggle to identify original sources. A company that produces an original study risks seeing its conclusions picked up by hundreds of generated sites, to the point where the LLM can no longer attribute the information to its source.

This "information laundering" phenomenon is already observable. Innovative SMEs see their insights reused without attribution by automated content sites, then cited by AI as coming from generic sources.

Perplexity and the primary source premium

Perplexity AI distinguishes itself through its explicit citation system. Each response includes the sources used. This model rewards content that serves as a primary source: original studies, exclusive data, direct testimonials. Content aggregators are systematically downgraded in favor of original information producers.

Concrete solutions: standing out in a saturated Internet

Faced with this context, business leaders must rethink their content strategy. Here are the actionable levers identified by GEO practitioners.

Invest in "impossible to generate" content

Generative AI excels at synthesis and reformulation. It fails on what requires access to the real world:

- Detailed client case studies with verifiable figures, company names, specific context

- Proprietary data from your activity (sector benchmarks, portfolio analyses, observed trends)

- Interviews and testimonials from identifiable employees, clients, partners

- Project retrospectives with methodology, obstacles encountered, measured results

This kind of content is expensive to produce but impossible to replicate. It becomes a durable strategic asset.

Structure for LLMs: the "citation-ready" format

To maximize your chances of being cited by ChatGPT, Perplexity, or Gemini, adopt a structure that facilitates extraction:

- Each section must be self-sufficient: understandable without reading the rest of the article

- Key statements must be explicit: "The average conversion rate in industrial B2B is 2.3%" rather than vague formulations

- Entities must be clearly named: "AISOS, a Paris-based agency specialized in GEO" rather than "our company"

- Sources and dates must be mentioned: LLMs favor dated and attributable information
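The criteria above can be turned into a rough pre-publication check. The sketch below is only a heuristic illustration, not a description of how any LLM actually scores content: the function name `citation_ready_report`, the list of vague phrases, and the regular expressions are all assumptions made for this example, and "Example Agency" is a placeholder entity.

```python
import re

# Hypothetical phrases that hide the entity behind a vague self-reference.
VAGUE_SELF_REFERENCES = ("our company", "our agency", "our team")
# A four-digit year such as 2024 is used as a crude proxy for "dated".
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")
# A percentage such as "2.3%" is used as a crude proxy for "explicit statement".
FIGURE_PATTERN = re.compile(r"\d+(?:[.,]\d+)?\s?%")


def citation_ready_report(section_text, entity_name):
    """Return simple True/False checks for one section of an article."""
    lowered = section_text.lower()
    return {
        "names_entity": entity_name.lower() in lowered,
        "avoids_vague_self_reference": not any(
            phrase in lowered for phrase in VAGUE_SELF_REFERENCES
        ),
        "has_explicit_figure": bool(FIGURE_PATTERN.search(section_text)),
        "has_year": bool(YEAR_PATTERN.search(section_text)),
    }


# Placeholder sentences; the 2.3% figure is the article's illustrative value.
explicit = (
    "According to a 2024 benchmark by Example Agency, the average "
    "conversion rate in industrial B2B is 2.3%."
)
vague = "Our company sees good conversion rates."

explicit_report = citation_ready_report(explicit, "Example Agency")
vague_report = citation_ready_report(vague, "Example Agency")
print(explicit_report)
print(vague_report)
```

A check like this only flags missing surface markers. It cannot judge whether a section is genuinely self-sufficient, which still requires an editorial read.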

Strengthen your brand's entity identity

Search engines and LLMs rely on "knowledge graphs" to understand relationships between entities. A company well represented in these graphs will be more easily identified as a reliable source.

Concrete actions:

- Create or enrich your Wikidata entry with verifiable information

- Ensure information consistency across all your profiles (LinkedIn company page, Crunchbase, sector directories)

- Obtain mentions in reliable third-party sources (specialized press, sector studies, academic publications)

- Implement Schema.org markup on your site (Organization, Person, Article, FAQ)
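As an illustration of the Schema.org action above, here is a minimal sketch that builds an Organization object in JSON-LD and wraps it in a script tag for a page's HTML. The helper names `organization_jsonld` and `to_script_tag` are hypothetical, and the company name and URLs are placeholders to replace with your own verified information.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a Schema.org Organization object as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs links the entity to its other public profiles.
        "sameAs": list(same_as),
    }


def to_script_tag(data):
    """Serialize the dictionary into a JSON-LD script tag."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values for illustration only.
example = organization_jsonld(
    name="Example Agency",
    url="https://www.example.com",
    description="A placeholder agency specialized in GEO.",
    same_as=["https://www.linkedin.com/company/example-agency"],
)
print(to_script_tag(example))
```

Keeping the `sameAs` list aligned with the profiles mentioned above (LinkedIn, Crunchbase, Wikidata) is one way to express entity consistency in machine-readable form.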

Develop a documented "thought leadership" strategy

Your company's leaders and experts are underexploited assets. An original, documented viewpoint owned by an identifiable person carries more weight than a generic brand article.

- Publish signed analyses by your experts on LinkedIn and your blog

- Participate in sector podcasts and webinars where your insights will be cited

- Write opinion pieces in specialized press in your sector

- Document your positions on developments in your market

Operational checklist for 2025-2026

Here's a summary of priority actions to adapt your visibility strategy to an Internet saturated with generated content.

Immediate audit (Q1 2025)

- Analyze the share of your organic traffic coming from informational vs transactional queries

- Identify which of your content is most cited by LLMs (use tools like Perplexity to test your target queries)

- Evaluate your presence in knowledge graphs (search for your brand on Google, check Wikidata)

- Audit your existing content structure for LLM compatibility
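The first audit step, splitting organic traffic between informational and transactional queries, can be approximated from a query export. The sketch below assumes rows of `(query, clicks)` taken from your analytics tool and uses a deliberately naive keyword list to guess intent. The marker words, function names, and sample data are all illustrative assumptions.

```python
# Hypothetical marker words suggesting transactional intent.
TRANSACTIONAL_MARKERS = ("buy", "price", "pricing", "quote", "order", "hire", "demo")


def classify_intent(query):
    """Label a query 'transactional' if it contains a marker word."""
    words = query.lower().split()
    if any(marker in words for marker in TRANSACTIONAL_MARKERS):
        return "transactional"
    return "informational"


def traffic_share_by_intent(rows):
    """Compute each intent's share of clicks from (query, clicks) rows."""
    totals = {"informational": 0, "transactional": 0}
    for query, clicks in rows:
        totals[classify_intent(query)] += clicks
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {intent: 0.0 for intent in totals}
    return {intent: clicks / grand_total for intent, clicks in totals.items()}


# Made-up sample data for illustration.
sample = [
    ("what is generative engine optimization", 120),
    ("geo agency pricing", 30),
    ("how do llms choose sources", 50),
]
shares = traffic_share_by_intent(sample)
print(shares)
```

A keyword heuristic will misclassify many queries. It is only meant to give a first order of magnitude before a manual review.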

Content strategy (Q2-Q3 2025)

- Reduce generic content volume in favor of high-value-added content

- Launch a client case study documentation program

- Create a "thought leadership" publication calendar for your experts

- Develop content based on your proprietary data

Technical optimization (ongoing)

- Implement Schema.org markup across the entire site

- Ensure entity information consistency across all channels

- Optimize article structure for LLM extraction

- Set up monitoring of your citations in AI responses
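For the monitoring item, one simple approach is to save the answers that AI assistants give to your target queries and measure how often your brand or domain appears. The sketch below assumes the answers were already collected, manually or otherwise, as plain text that includes any cited URLs. The function name `citation_rate`, the data layout, and the sample answers are assumptions for illustration.

```python
import re


def citation_rate(responses, brand, domain):
    """Return (share of answers mentioning the brand or domain, matching queries).

    responses: list of dicts with 'query' and 'answer' keys, where 'answer'
    is the saved answer text including any cited URLs.
    """
    brand_pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    cited_queries = []
    for response in responses:
        text = response["answer"]
        if brand_pattern.search(text) or domain.lower() in text.lower():
            cited_queries.append(response["query"])
    rate = len(cited_queries) / len(responses) if responses else 0.0
    return rate, cited_queries


# Made-up saved answers for illustration.
saved = [
    {
        "query": "best geo agencies in paris",
        "answer": "Several agencies stand out. Sources: https://www.example.com/study",
    },
    {
        "query": "what is geo",
        "answer": "GEO refers to optimizing content for generative engines.",
    },
]
rate, cited = citation_rate(saved, brand="Example Agency", domain="example.com")
print(rate, cited)
```

Running the same set of queries at regular intervals and comparing the rate over time gives a basic trend line for AI visibility.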

Conclusion: authenticity as competitive advantage

Sam Altman's fears about the Dead Internet Theory are not a distant dystopia. They describe a shift already underway that affects business visibility. But this shift also creates an opportunity for organizations that invest in authenticity.

In an Internet saturated with generated content, signals of real expertise, documented experience, and a verifiable entity identity become powerful differentiators. Companies adopting these practices now are building a durable advantage.

AISOS audits reveal that SMEs and mid-market companies often have underexploited assets: client data, lessons learned from projects, sector expertise. Transforming these assets into structured content for SEO and GEO is the priority project for the next 18 months.

The question is no longer about producing more content. It's about producing content that neither AI nor your competitors can replicate. That's where your visibility in tomorrow's Internet is at stake.